ISO/IEC 42001: The Future of Responsible AI Governance

Introduction

Unclear AI rules create repeat work, stalled launches and avoidable incidents. ISO/IEC 42001 addresses this with a standard framework for how you scope AI use, assess risk, test models and monitor outcomes under human oversight.

Adopting 42001 moves AI from ad hoc practices to a documented management system in which roles are clear, data lineage is known and decisions can be explained. That builds confidence with customers and regulators and can shorten security reviews during sales cycles.

AI now powers search, fraud prevention and life-critical decisions in healthcare and finance. Trust in these systems depends on clear rules for data use, testing and oversight. ISO/IEC 42001 gives institutions a practical way to run AI like a managed system instead of a collection of experiments, so leaders can reduce risk, win enterprise deals and meet rising legal expectations without slowing product delivery.

Quick summary

ISO/IEC 42001 specifies requirements for an AI management system (AIMS) that connects policy, risk assessment, model lifecycle controls and monitoring. It pairs well with ISO/IEC 27001 for information security and ISO 9001 for quality management. Institutions use 42001 to meet buyer due diligence, improve audit readiness and track results with KPIs such as bias findings closed, model review cadence, incident response time and SLA uptime for AI services.

Assess your readiness to align with ISO/IEC 42001 requirements: look at the leadership roles, policies, risk assessment methods and accountability structures that support responsible AI use.

Why is ISO/IEC 42001 rising now?

The adoption of ISO/IEC 42001 is accelerating because the risks and expectations surrounding AI have moved from theory into daily business reality. Institutions no longer use AI only for research or small pilots; AI now drives decisions in finance, healthcare, hiring, security and national infrastructure. Buyers, regulators and end users all want assurance that these systems are safe, transparent and accountable.

Enterprise clients, in particular, now expect vendors to show structured AI governance before signing contracts. They want proof that AI models have been tested for bias, validated for accuracy, and are monitored for drift or misuse. Without that assurance, sales cycles are delayed, contracts are lost and reputational risks grow. ISO/IEC 42001 provides a single, globally recognized framework to satisfy these demands and to streamline due diligence.

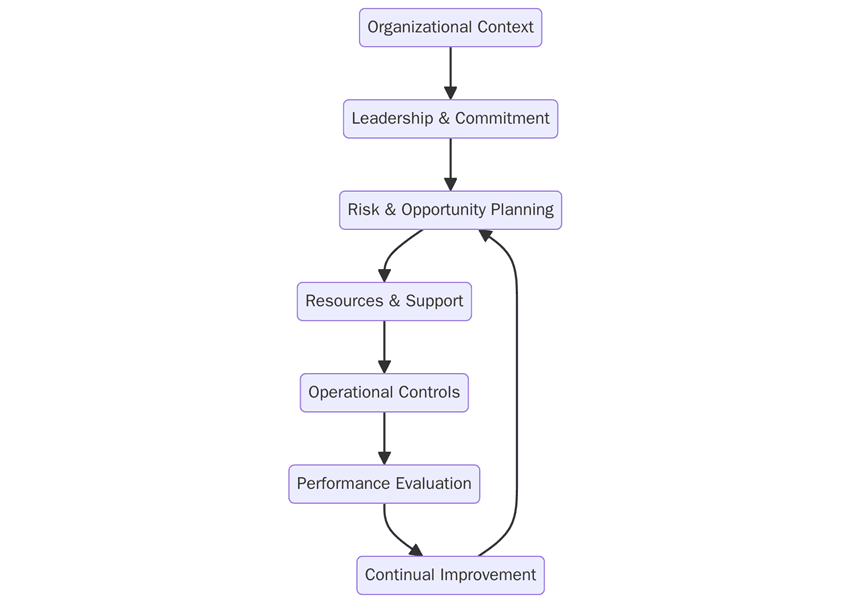

What are the requirements of ISO/IEC 42001?

To achieve certification, institutions must implement structured systems that ensure AI development and deployment are transparent, accountable and aligned with global expectations. These requirements cover everything from governance policies to technical controls and ongoing monitoring. Below are the key requirements:

Define scope for AI uses, products and locations with clear boundaries

Publish AI policies on transparency, safety, accountability and human oversight

Conduct risk assessments for bias, misuse, safety impact and data issues

Document processes for data sourcing, labelling, consent and retention

Establish model controls for training, validation, versioning and approvals

Provide evidence records such as data lineage logs, model cards and audit trails

Set monitoring for drift, performance, bias and incident handling

Train staff on roles across product, data, ML and compliance

Run internal audits on the AI management system and close findings

Hold leadership reviews of objectives, risks and performance indicators

Correct nonconformities and keep improving as laws and models evolve
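The standard calls for risk assessments covering bias, misuse, safety impact and data issues, but it leaves the scoring method to the institution. One common approach is a risk register that scores each risk as likelihood × impact and flags anything above a threshold for treatment. The sketch below assumes that method; the `Risk` fields, the 1 to 5 scales and the threshold of 12 are illustrative assumptions, not values taken from ISO/IEC 42001.

```python
from dataclasses import dataclass


# Hypothetical risk-register entry; field names and the 1-5 scales are
# illustrative assumptions, not terms defined by ISO/IEC 42001.
@dataclass
class Risk:
    risk_id: str
    category: str    # e.g. "bias", "misuse", "safety", "data"
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Simple likelihood x impact scoring; other methods are equally valid.
        return self.likelihood * self.impact


def needs_treatment(risks: list[Risk], threshold: int = 12) -> list[Risk]:
    """Return risks at or above the (assumed) treatment threshold, highest first."""
    for r in risks:
        if not (1 <= r.likelihood <= 5 and 1 <= r.impact <= 5):
            raise ValueError(f"{r.risk_id}: likelihood and impact must be 1-5")
    flagged = [r for r in risks if r.score >= threshold]
    return sorted(flagged, key=lambda r: r.score, reverse=True)


register = [
    Risk("R1", "bias", likelihood=4, impact=4),    # score 16
    Risk("R2", "misuse", likelihood=2, impact=5),  # score 10
    Risk("R3", "data", likelihood=3, impact=4),    # score 12
]

for r in needs_treatment(register):
    print(r.risk_id, r.category, r.score)
```

Running it lists R1 and R3, the two risks scoring 12 or more; R2 stays on the register but below the assumed treatment line. Whatever method you choose, auditors will mainly look for it to be documented and applied consistently.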

How do you prepare for ISO/IEC 42001?

Preparation for ISO/IEC 42001 involves aligning existing practices with certification requirements and building evidence to show auditors that governance is operational, not just documented. The process should engage leadership, technical teams and compliance staff. Key preparation steps include:

Run a gap analysis against 42001 and map overlaps with 27001 and 9001

Align policies for AI ethics, transparency and security across teams

Define scope starting with high-risk or flagship AI products

Build documentation for data flows, model cards, testing results and approvals

Implement controls for bias testing, explainability, rollback and incident response

Pilot internal audits and fix issues before the external assessment

Set KPIs and SLAs for bias closure time, model review cadence, uptime and mean time to resolve incidents (MTTR)
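Several of these KPIs reduce to simple arithmetic over incident records. The sketch below assumes a hypothetical incident log of `(opened, resolved)` timestamps for one AI service and computes mean time to resolve (MTTR) and availability over a 30-day window; the record format, the window and the figures are made up for illustration, and it treats every incident as full downtime, which real SLA definitions may not.

```python
from datetime import datetime, timedelta

# Hypothetical incident log for one AI service: (opened, resolved) timestamps.
incidents = [
    (datetime(2024, 5, 3, 9, 0), datetime(2024, 5, 3, 11, 0)),     # 2 h
    (datetime(2024, 5, 17, 14, 0), datetime(2024, 5, 17, 15, 0)),  # 1 h
]


def mttr(records: list[tuple[datetime, datetime]]) -> timedelta:
    """Mean time to resolve: average of (resolved - opened) across incidents."""
    if not records:
        return timedelta(0)
    total = sum((resolved - opened for opened, resolved in records), timedelta(0))
    return total / len(records)


def availability(records: list[tuple[datetime, datetime]], window: timedelta) -> float:
    """Uptime percentage, assuming every incident counts as full downtime."""
    downtime = sum((resolved - opened for opened, resolved in records), timedelta(0))
    return 100 * (1 - downtime / window)


window = timedelta(days=30)
print(f"MTTR: {mttr(incidents)}")
print(f"Availability: {availability(incidents, window):.3f}%")
```

With these two incidents, MTTR is 1.5 hours and availability over 30 days is about 99.583%. The same averaging works for bias closure time if each bias finding is logged with an opened and a closed timestamp.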

Certification audit

The certification audit is a structured process conducted in two stages, followed by ongoing surveillance and recertification. It verifies that AI governance policies are both documented and operating in practice across systems. The audit flow is as follows:

Stage 1 audit: Reviews documented scope, policies, risk assessments and evidence of AI lifecycle controls.

Stage 2 audit: Evaluates implementation across products, data pipelines and operations with interviews and samples.

Nonconformities: Must be corrected with documented proof before approval.

Management review: Auditors confirm that leadership has reviewed the AI management system and committed resources for ongoing governance.

Final certification: Awarded after all gaps are resolved.

Surveillance audits: Conducted annually to verify controls remain in place and effective.

Recertification audits: Required every three years to keep the certificate valid.
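Because surveillance is annual and recertification falls every three years, the audit calendar can be roughed out from the certificate issue date. The helper below is a hypothetical planning sketch: it assumes surveillance audits fall on the first and second anniversaries and recertification is due by the third, which simplifies the actual audit windows a certification body will agree with you.

```python
from datetime import date


def add_years(d: date, years: int) -> date:
    """Shift a date by whole years, moving 29 Feb to 28 Feb in non-leap years."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        # Only 29 Feb can fail here, so fall back to 28 Feb.
        return d.replace(year=d.year + years, day=28)


def audit_calendar(issue_date: date) -> dict[str, date]:
    """Hypothetical three-year cycle: two annual surveillance audits, then recertification."""
    return {
        "surveillance_1": add_years(issue_date, 1),
        "surveillance_2": add_years(issue_date, 2),
        "recertification_due": add_years(issue_date, 3),
    }


for name, due in audit_calendar(date(2024, 2, 29)).items():
    print(name, due.isoformat())
```

The leap-day example shows the edge case: a certificate issued on 29 February 2024 gets milestones on 28 February 2025, 2026 and 2027.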

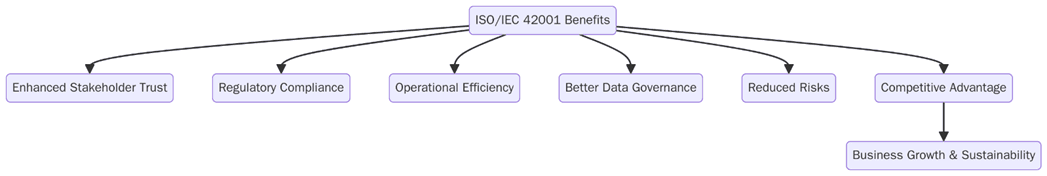

What are the benefits of ISO/IEC 42001?

Certification turns AI governance into a repeatable system buyers can trust. It reduces go-to-market friction, supports legal compliance and improves incident readiness. Institutions track progress with KPIs like bias incident rate, drift alerts handled, audit closure time and SLA uptime for AI services. The main benefits include:

Buyer confidence through audited AI controls

Faster sales cycles with standard evidence for due diligence

Lower risk of bias, misuse and safety failures

Clear accountability across data, ML and product teams

Better documentation for privacy and security reviews

Scalable governance as AI use grows across the portfolio

Institutions are pairing ISO/IEC 42001 with ISO/IEC 27001 and ISO 22301 to create one integrated system for security, AI governance and business continuity. Vendor management is now in scope too, with SLAs that cover data access, model update cadence, explainability reports and security posture. Teams publish dashboards for bias testing frequency, incident response time, model rollback rate and audit closure periods so customers see real outcomes, not just a certificate.

How can Pacific Certifications help?

Pacific Certifications, accredited by ABIS, provides ISO/IEC 42001 certification for AI-driven institutions in every sector. We help you define scope, close gaps and prepare evidence that satisfies enterprise buyers and regulators.

Request your ISO audit plan and fee estimate, and we will help you map Stage 1 and Stage 2 timelines and evidence requirements for your institution.

Contact Us

Contact us at support@pacificcert.com or visit www.pacificcert.com.

Author: Alina Ansari
